The Cyborgization of Humanity

Ben Goertzel

February 25, 2002

Today we interface with computers primarily at arm’s length. Sure, the current standard keyboard-mouse-monitor setup is a damn sight more convenient than the line printers that we computing old-timers once used to communicate with lumbering mainframes. Touchscreens, drawing tablets, sound cards and MIDI musical instrument interfaces are awfully nice too. Direct computer interfaces to EEG and EKG machines save lives. Voice-based computer control will be great once the technology improves a bit.

But wouldn’t it be nice if the integration were tighter than all this? What if you could control your computer just by thinking? What if the PalmPilot in your pocket sensed the electrical flow in your brain? What if you could think “Yo, PalmPilot, what’s Mike Smith’s address??” and have the address appear in your mind? What if you could hear a melody in your head, and by the pure power of your thought cause that very same melody to emanate from your stereo speakers? What if you could envision a picture in your mind, transmit it to your PalmPilot, then beam it to your friend’s PalmPilot, and straight into their brain? What if you could connect your visual centers to an infrared camera, allowing you to actually see in the dark? What if a mathematician could reference a powerful computer algebra program like Mathematica, purely by power of thought – what kind of human-computer collaborative calculations could they do? What if you could reliably and directly sense your partner’s subtle emotional shifts and unspoken desires during the act of love? What if our brains could access virtual realities and zoom through them with freedoms and capabilities unknown here on Earth?

The power of brain-computer integration is obvious, and the danger at least equally so. What happens when the neurochip crashes? What about when some crazy hacker releases a virus into your mind? In the 2050 Olympics, will athletes whose reflexes are enhanced by brain-embedded chips be allowed to compete? In 2100, will bringing a directly-brain-linked PalmPilot-type device to a math test be considered cheating, or will it simply be expected?

The first steps toward the cyborgization of humanity are already well underway. Disabled people wear bionic limbs with onboard microprocessors; the severely paralyzed can communicate by using special brainwave detectors to control their computers. British computer scientist Kevin Warwick plans to embed chips in himself and his wife that will allow them to directly sense each other’s emotions, by transmitting to one another the nervous system stimuli indicating emotional states. A Japanese firm sells chips intended to be embedded in old people – to enable a person to track their senile grandparent should they wander off. One step at a time, the boundary between human and computer gets thinner and thinner.

The Cyberlink System

The Cyberlink system, and its application to enable communication by the severely disabled

http://www.brainfingers.com/pricing_and_ordering.htm

http://www.brainfingers.com/success.htm

The pioneer cyborgs of today are not computer geeks but rather disabled people. The wooden legs and Captain Hook style hands of ages past are replaced by bionic limbs, with embedded computers, directly patched into the body’s nerves for effective and responsive control. Cochlear implants, allowing the deaf to hear, get better and better each year. Scientists have constructed crude systems that pipe video camera output into the visual cortex – successors of these systems will allow blind people to see, and furthermore to see in the dark using infrared cameras, thus in some ways seeing better than the conventionally sighted. Today the TV show Star Trek: The Next Generation features a biologically-blind guy with a bionic super-eyepiece. In a few decades this sort of thing will be a reasonably common sight in the streets of New York.

A fascinating example of cyborg technology helping the disabled is the Cyberlink system, created by the Brainfingers Corporation (www.brainfingers.com). Cyberlink reads your eye movements, facial muscle movements, and forehead-expressed brain wave bio-potentials, and from a synthesis of these inputs it creates a signal to send to a computer. You don’t have to type or click to control your computer – you just have to think, or roll your eyes a little. A life-transforming device for those whom injury or congenital defect has deprived of the normal power of bodily motion. And a first step toward a new way of interacting with computers for all of us.

The forehead is a surprisingly outstanding place to gather biosignals. All you need is a simple headband with three plastic sensors on it. The headband connects to a Cyberlink interface box, containing a signal amplifier and some signal processing software, which in turn plugs into a standard PC serial port. After some practice, you can figure out how to send commands to the computer, using a combination of eye movements, facial muscle movements, and thoughts.

It’s the combination of inputs that makes Cyberlink so powerful, as compared to competing products. For quite some time researchers have been playing around with using EEG waves to control computers. But the skull is thick, and the electromagnetic waves that come through it are diffuse and hard to interpret. They represent averages of brain activity over broad regions. If it were possible to measure the electrical potential of an individual neuron in the brain, then incredibly fine-grained directly-thought-powered computer control would be possible. But this isn’t feasible yet, and so combining EEG with other sources of information is currently the best way to go.

Newbie Cyberlink users generally get started with subtle tensing and relaxing of the forehead, eye and jaw muscles. With more experience, a shift is made to using predominantly brain-based signals. Often mental and eye-movement signals are used to move a cursor around, and facial muscle activity is used to turn switches on and off. A US Air Force study showed that subjects’ reaction times to visual stimuli were 15% faster with Cyberlink than with a manual button. For people without reliable muscle control, after a little more effort purely thought-based signals can be used to control every aspect of a computer’s behavior.
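To make that division of labor concrete, here is a minimal sketch in Python of how such a mapping might look. It is not the Cyberlink software, whose internals aren't described here. The channel names, the value ranges, the thresholds and the `Controller` class are all hypothetical, invented purely for illustration. The sketch assumes the interface box has already boiled the raw biosignals down to three normalized numbers per reading: two continuous signals that steer the cursor, and a muscle-tension level that flips a switch.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """One hypothetical reading, already normalized by the interface box."""
    eye_x: float    # eye-movement signal, assumed range -1..1
    brain_y: float  # brain-wave-derived signal, assumed range -1..1
    muscle: float   # facial muscle tension, assumed range 0..1


class Controller:
    """Maps continuous signals to cursor motion and muscle tension to a switch."""

    def __init__(self, speed=10.0, press=0.7, release=0.3):
        self.x = 0.0
        self.y = 0.0
        self.switch_on = False
        self.speed = speed      # cursor units moved per unit of signal
        self.press = press      # tension at or above this counts as a "press"
        self.release = release  # tension must fall to this before the next press
        self._tensed = False

    def update(self, s):
        # Continuous channels nudge the cursor a little on every reading.
        self.x += self.speed * s.eye_x
        self.y += self.speed * s.brain_y

        # Two thresholds (hysteresis) so one sustained clench toggles the
        # switch exactly once instead of flickering on every reading.
        if not self._tensed and s.muscle >= self.press:
            self._tensed = True
            self.switch_on = not self.switch_on
        elif self._tensed and s.muscle <= self.release:
            self._tensed = False

        return self.x, self.y, self.switch_on


if __name__ == "__main__":
    c = Controller()
    for reading in [Sample(0.5, -0.2, 0.1), Sample(0.0, 0.0, 0.9),
                    Sample(0.0, 0.0, 0.8), Sample(0.0, 0.0, 0.1)]:
        print(c.update(reading))
```

The two-threshold trick is a design choice of this sketch, not a claim about the real product: it simply keeps a single clench of the jaw from registering as a rapid burst of on-off commands.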

The process of learning to use the system isn’t always easy. For the completely paralyzed, it can sometimes take months. On the other hand, once success is achieved, the results can be transformational and amazing. It’s an awkward, clunky way to manipulate a computer, but it’s an infinite improvement on not being able to communicate at all.

A more frivolous application of the Cyberlink was created by Dr. Andrew Junker, the originator of the Cyberlink System, together with composer Chris Berg. It allows you to create music through the power of thought. Volume, pitch and velocity can be controlled, along with other factors such as the degree of melodic and rhythmic complexity of the sounds produced. Yes, it’s still a long way from the point where you can compose a new symphony in your head and, at the same time, miraculously hear it coming out of your computer speaker. But we’re at the first step along this tremendously exciting path. The same technology that allows the fully paralyzed to communicate will increasingly allow us to communicate the sounds, sights, feelings and thoughts that are trapped inside us.

The Cyborg Professor

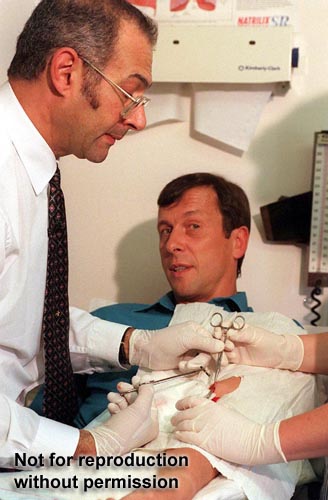

Kevin Warwick receiving a cybernetic implant

Medical work helping the disabled is arguably the contemporary vanguard of cyborgization … but one British computer science researcher is making a play to gain this title for himself. Dr. Kevin Warwick, who titled his recent autobiography I, Cyborg, has embarked upon a radical and ambitious program of experimental self-cyborgization. The scientific techniques he’s using are not particularly innovative, but his willingness to use himself as a guinea pig has made the headlines more than once; and who knows, his gutsy self-modificatory research may yet lead to breakthroughs.

Warwick’s first self-cyborgization experiment, in 1998, was mild in terms of the potential scope of human-computer synthesis, but nonetheless radical with respect to contemporary human experience. What he did was to turn himself into a human remote control. He had a tiny glass container full of transponders implanted into the skin of his arm, and had controls set up so that when he walked through doorways, the lights would turn on; when he walked into his study, his computer would boot up; and so forth.

Pragmatically, there’s not so much difference between what he did here and simply carrying an advanced remote control in one’s pocket, or strapped around one’s waist. But there’s a major philosophical difference. The controller was in his body, it was a part of him, not something he was carrying around and could pick up or put down.

His musings on the experience seem rather more dramatic than what was actually accomplished. “After a few days,” he said, “I started to feel quite a closeness to the computer, which was very strange. When you are linking your brain up like that, you change who you are. You do become a 'borg. You are not just a human linked with technology; you are something different and your values and judgment will change."

"I didn't feel like Big Brother was watching, probably because I benefited from the implant: The doors opened and lights came on, rather than doors closing and lights turning off. It does make me feel that Orwell was probably right about the Big Brother issue -- we'll just go headlong into it; it won't be something we'll see as a being negative because there will be lots of positives in it for us."

His next experiment, however, seems significantly more interesting. As I write these words in February 2002, the new operation is just now taking place. Check www.kevinwarwick.com to see the results. This time it’s no mere implant in the arm; it’s a genuine connection to his nervous system. He’ll receive a tiny collar, circling around the bundle of nerve fibers at the top of his arm, which will read the signals from his nervous system and send them to the computer. This isn’t all that different from what happens when a bionic leg is connected up to a disabled person. But the purpose of the experiment is different.

Part of it is simple: he’ll test whether, by waving his arms appropriately, he can control his computer in various ways. A kind of extension of the Cyberlink methodology to the whole body – but without anything like the Cyberlink’s special forehead device; the control comes entirely from under the skin. “In the very near future,” he says, “we should be able to operate computers without the need for a keyboard or a computer mouse. It should be simply possible to type on your computer just by writing with your finger in the air."

And the implant will also receive signals from the computer. So he can shake his hand around, “save” the corresponding electrical signals on the computer, and then later play back the signals into his arm. And what happens then? Will his arm shake around involuntarily? What will happen to the computer-generated signals, when they voyage back up to his brain?

The aspect of the experiment that has attracted the most media attention involves his wife, Irina, who has agreed to receive an identical implant – assuming Kevin’s initial experience with the implant goes OK. Man and wife will then directly share electricity, nervous system to nervous system. If he feels angry and tensed up, and passes the corresponding electrical signals to her, will she feel the same thing?

One British tabloid labeled this new implant a "love chip" – the kind of publicity that Warwick seems to love, but that causes grimaces on the faces of bionics researchers and others working in more traditional cyborg-tech domains. But, media hound though he indisputably is, Warwick is still a scientist at heart, and so he had to correct the love-chip idea. “Emotions such as anger, shock and excitement can be investigated because distinct signals are apparent,” he clarified. “[M]ore obtuse emotions such as love we will not be tackling directly.”

Not yet, at any rate. Not with computer sensors hooked up to the arm. To master love you may need the holy grail of cyborgization -- the fabled cranial jack, the two-way link from the computer to the brain.

In any case, he makes no bones about what he sees for the future. When asked who’s going to be running the show in 2100, his reply was: first AI programs, then cyborgs, then humans.

But as usual with advanced technology, the scary aspects are offset by the humanitarian aspects, which go far beyond assisting the disabled. “What,” Warwick asks, “if we could develop signals to counteract pain?”

Or, perhaps, deliver pleasure? A lucrative future business to say the least.

The Rise of the Cyborgs

Cyborgization now is primitive. Just like airplanes were in the time of the Wright Brothers, when various pundits declared: “Sure, you’ve made a plane fly, but you’ll never make one fly as fast as a car can drive.” But neither neuroscience nor computer technology and microminiaturization show any sign of slowing down. Rather, they’re speeding up. As cyborg technology advances, more and more techno-conservatives will rise up in arms with ethical complaints. But who can really complain about giving sight to the blind, giving hearing to the deaf, creating bionic arms and legs, giving communication skills to the horribly disabled who can’t communicate in any other way, allowing paraplegics or quadriplegics to control their bodies again? The research will get done because of the tremendous humanitarian applications. And then the Kevin Warwicks of the world – more and more of them as time goes on, adults who as kids spent endless hours watching cyborgs on Cartoon Network – will put the technology to use in other ways, ways that will make the techno-conservatives very uncomfortable.

There seems little doubt that eventually nearly every human being will be linked in directly with one or more electronic devices. And if the interfacing isn’t as smooth as one would like, may this not eventually be solved by genetic engineering? Why not tailor a new generation of humans for easier human-computer integration? Why keep operating on people to insert chips into their brains, when you can genetically engineer people who have nice handy chip ports on the backs of their heads? If some people have trouble harmonizing their thought processes with the calculational processes of their digital symbiotic partner, then what’s the solution? You can improve the digital half of the equation, but why not also the human half? Human thought processes can be genetically re-engineered to make more natural use of onboard calculators, computers, and petabyte in-brain memory modules.

Oh brave new world, that has such cyborgs in it?